BRAM

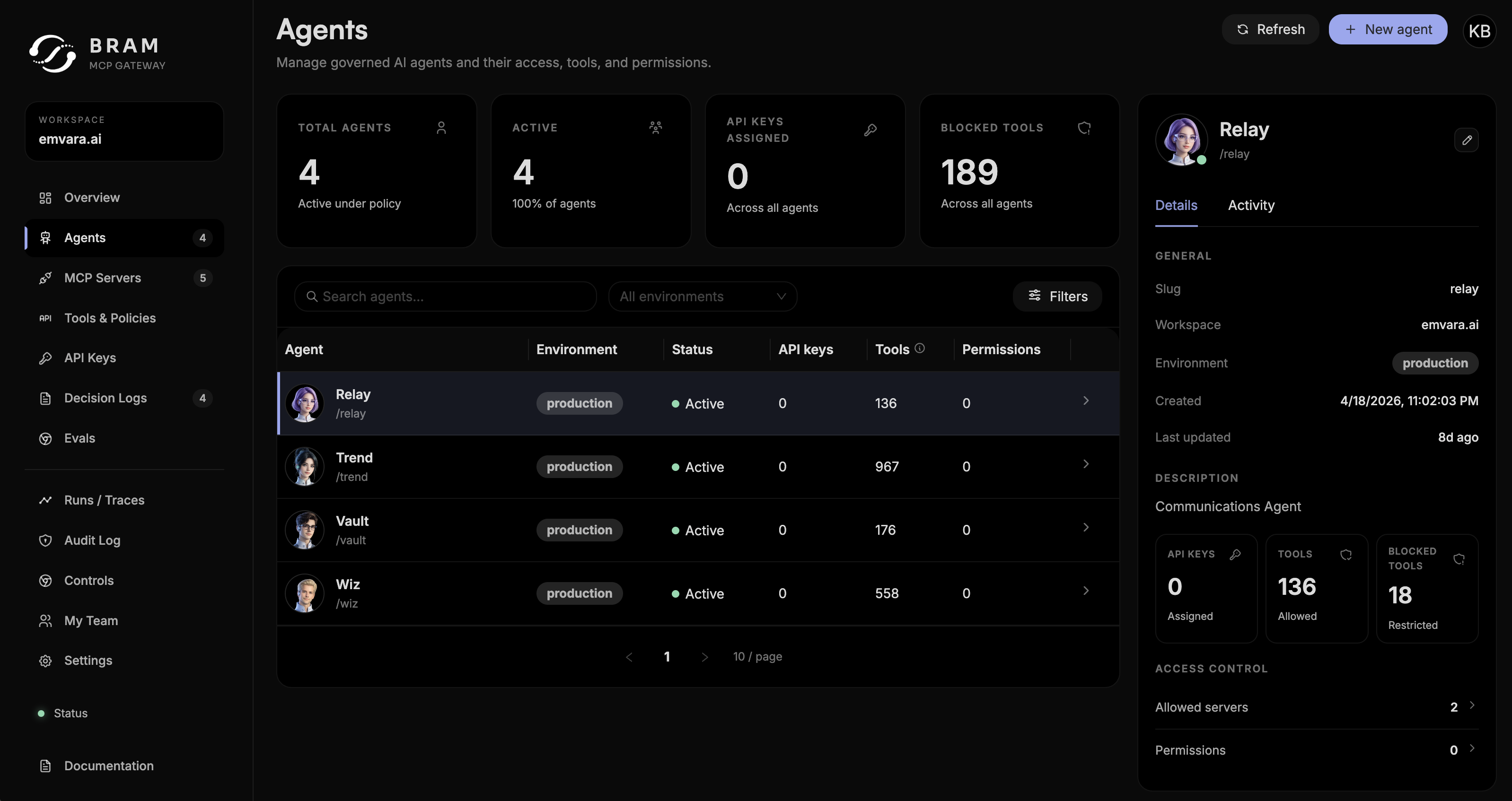

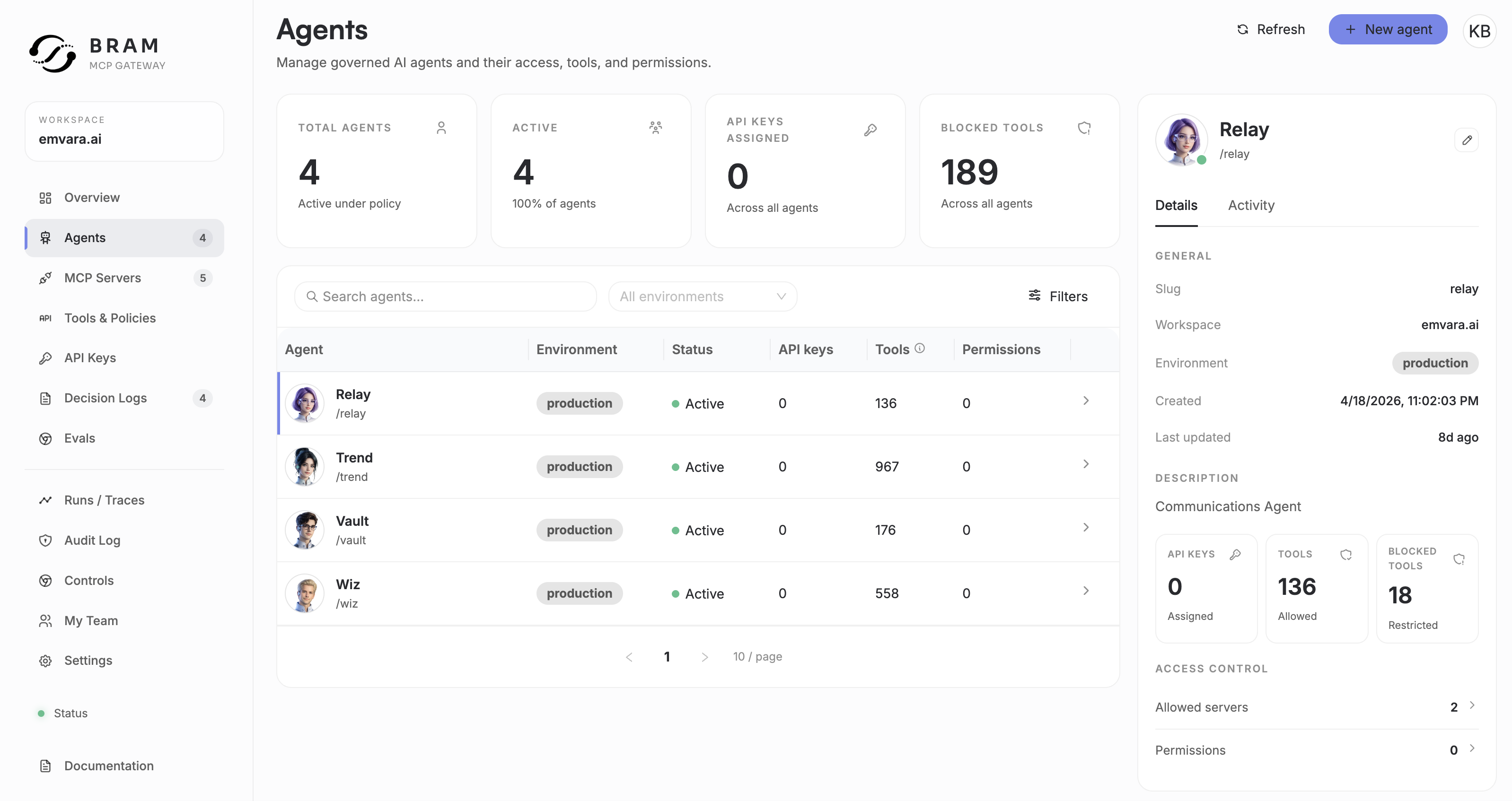

BRAM is an MCP gateway for production AI agents, built to observe every agent-tool call, enforce policies centrally, and run inline guardrails and post-hoc evaluations across connected MCP servers.

Problem

Building Uptivus and Humagician made one thing obvious: once AI agents stop being demos and start touching real systems, the hard part is no longer just giving them tools. The hard part is knowing what they did, deciding what they are allowed to do, and evaluating whether their actions were actually safe and useful.

Uptivus created a need for AI workflows that could inspect incidents, reason across monitoring data, and connect to alerting and automation systems. Humagician pushed that further with agentic inbound automation: chat, forms, support workflows, knowledge bases, and task execution across customer-facing channels.

Both products needed agents that could connect to external capabilities through MCP servers. But direct agent-to-server connections create a control problem:

- every agent can become its own security boundary

- tool approvals and access rules are scattered across applications

- audit history is hard to search, replay, or export

- prompt injection and data leakage risks sit directly on the request path

- evaluations often happen after the fact, if they happen at all

BRAM grew out of that need for a shared gateway layer between AI agents and the MCP servers they call.

Implementation

BRAM is being built to sit between agents and their tools, giving teams one control plane for policy, observability, guardrails, and evaluations without requiring changes to agent code.

The public product model is intentionally direct: point agents at BRAM Gateway instead of individual MCP servers, keep the agent workflow intact, and let the gateway inspect, govern, and route every call. The gateway speaks native MCP, can be configured to fail open or fail closed, and supports cloud, VPC, and on-prem deployment paths.
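The fail-open versus fail-closed choice decides what happens when the gateway's own checks cannot run. The following is a minimal Python sketch, not BRAM's API. `forward_call`, `FailureMode`, `GatewayBlocked`, and the `check`/`upstream` callables are hypothetical names. The sketch also assumes that the failure mode applies only when the check itself errors, so an explicit deny always blocks.

```python
from __future__ import annotations

from enum import Enum
from typing import Any, Callable


class FailureMode(Enum):
    OPEN = "open"      # if the check itself errors, forward the call anyway
    CLOSED = "closed"  # if the check itself errors, block the call


class GatewayBlocked(Exception):
    """Raised when the (hypothetical) gateway refuses to forward a tool call."""


def forward_call(
    tool: str,
    args: dict[str, Any],
    check: Callable[[str, dict[str, Any]], bool],
    upstream: Callable[[str, dict[str, Any]], Any],
    mode: FailureMode,
) -> Any:
    """Run the gateway check, then forward the call to the upstream server.

    A check that returns False always blocks. Only a check that raises
    (for example, an unreachable policy service) is governed by `mode`.
    """
    try:
        allowed = check(tool, args)
    except Exception:
        allowed = mode is FailureMode.OPEN
    if not allowed:
        raise GatewayBlocked(f"call to {tool!r} blocked")
    return upstream(tool, args)
```

Failing open favors availability because agents keep working during a policy-service outage. Failing closed favors safety because no call proceeds unchecked. Which one is right depends on how risky the tool is.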

Core platform capabilities include:

- Centralized policy enforcement that decides what each agent can do

- Allow, deny, and approval modes, with approval mode holding tool calls that need human sign-off

- Identity-aware controls so policies can vary by agent, tool, team, or workspace

- Observability on every call with searchable traces, decisions, and audit records

- Inline guardrails that run on the live gateway request path

- Post-hoc governance evaluations against captured traces and curated datasets

- Versioned policy changes that can be rolled forward or back safely

- Audit exports for teams that need a durable record of agent behavior

The point is to make MCP usable in production without turning every product into its own governance project.
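The policy capabilities above can be sketched as a small rule engine. This is a minimal illustration under stated assumptions, not BRAM's implementation. `Rule`, `CallContext`, `PolicyStore`, and the first-match-wins and default-deny semantics are all hypothetical choices. Rules match on agent, tool, team, and workspace, and each one resolves to allow, deny, or require-approval. Every published rule set is kept as a numbered version so it can be rolled back.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    REQUIRE_APPROVAL = "require_approval"


@dataclass(frozen=True)
class CallContext:
    agent: str
    team: str
    workspace: str
    tool: str


@dataclass(frozen=True)
class Rule:
    decision: Decision
    tool: str = "*"  # "*" matches any value
    agent: str = "*"
    team: str = "*"
    workspace: str = "*"

    def matches(self, ctx: CallContext) -> bool:
        # Every field must be a wildcard or an exact match.
        return all(
            pattern in ("*", value)
            for pattern, value in (
                (self.tool, ctx.tool),
                (self.agent, ctx.agent),
                (self.team, ctx.team),
                (self.workspace, ctx.workspace),
            )
        )


class PolicyStore:
    """Keeps every published rule set so a bad change can be rolled back."""

    def __init__(self, default: Decision = Decision.DENY) -> None:
        self.default = default
        self.versions: list[tuple[Rule, ...]] = [()]  # version 0: no rules
        self.active = 0

    def publish(self, rules: list[Rule]) -> int:
        self.versions.append(tuple(rules))
        self.active = len(self.versions) - 1
        return self.active

    def rollback(self, version: int) -> None:
        if not 0 <= version < len(self.versions):
            raise ValueError(f"unknown policy version {version}")
        self.active = version

    def decide(self, ctx: CallContext) -> Decision:
        # First matching rule wins; unmatched calls fall back to the default.
        for rule in self.versions[self.active]:
            if rule.matches(ctx):
                return rule.decision
        return self.default
```

For example, consider publishing `[Rule(Decision.REQUIRE_APPROVAL, tool="send_email", team="support"), Rule(Decision.ALLOW, team="support")]`. That rule set would let a support agent call other tools freely while holding outbound email for sign-off. Calling `rollback` with an earlier version number restores the previous behavior without editing rules in place.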

Why It Exists

Uptivus and Humagician both depend on AI that can take action. That changes the engineering standard.

For a monitoring platform, an agent may need to inspect uptime events, summarize anomalies, route alerts, or trigger workflow steps. For an inbound automation platform, an agent may need to answer customers, draft replies, update records, call business systems, and execute automations. In both cases, the agent is not just generating text. It is operating near real business infrastructure.

That raised a set of product questions that belonged outside any single app:

- Which tools should this agent be allowed to call?

- Should this action execute immediately, require approval, or be blocked?

- What happened before and after a tool call?

- Did the agent expose sensitive data, follow policy, or drift from expected behavior?

- Can we replay or evaluate this behavior later?

BRAM is the answer to those questions. It gives the agent stack a dedicated governance and evaluation layer, instead of burying that logic inside each individual product.

Gateway Model

BRAM acts as the boundary between agent reasoning and external action.

Agents connect to BRAM as their MCP endpoint. BRAM then manages the relationship between those agents and the upstream MCP servers they need. That makes the gateway the place to enforce rules, capture traces, run guardrails, and produce an audit trail across the full agent-tool lifecycle.
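The following is a minimal sketch of this gateway model, assuming tool names are unique across upstream servers. In real MCP, agents would send JSON-RPC `tools/call` requests. This sketch skips the protocol layer and models each upstream server as a plain Python callable. `Gateway`, `TraceRecord`, and `export_audit` are hypothetical names. The point is structural: the agent sees one endpoint, the gateway picks the upstream server, and every routed call leaves a trace that can be exported as JSON Lines.

```python
from __future__ import annotations

import json
import time
from dataclasses import asdict, dataclass, field
from typing import Any, Callable

Upstream = Callable[[str, dict[str, Any]], Any]


@dataclass
class TraceRecord:
    agent: str
    server: str
    tool: str
    args: dict[str, Any]
    outcome: str  # "ok" or "error"
    latency_ms: float


@dataclass
class Gateway:
    """One agent-facing endpoint that fans out to several upstream servers."""

    routes: dict[str, tuple[str, Upstream]]  # tool name -> (server name, handler)
    traces: list[TraceRecord] = field(default_factory=list)

    def call_tool(self, agent: str, tool: str, args: dict[str, Any]) -> Any:
        # Unknown tools are rejected before routing, so they are not traced here.
        if tool not in self.routes:
            raise KeyError(f"no upstream server exposes {tool!r}")
        server, handler = self.routes[tool]
        start = time.perf_counter()
        outcome = "error"
        try:
            result = handler(tool, args)
            outcome = "ok"
            return result
        finally:
            # Record the call whether the handler returned or raised.
            self.traces.append(TraceRecord(
                agent, server, tool, args, outcome,
                (time.perf_counter() - start) * 1000,
            ))

    def export_audit(self) -> str:
        """Serialize captured traces as JSON Lines for a durable audit record."""
        return "\n".join(json.dumps(asdict(t)) for t in self.traces)
```

Because tracing happens in the gateway rather than in each agent, adding a new agent or a new upstream server does not change how calls are recorded.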

The public site organizes the product around three pillars:

- Policy Engine: decide what every agent can do once, centrally

- Observability: see every call and search every decision

- Guardrails & Evals: combine live guardrails with scheduled evaluations

That combination is important. Inline guardrails are for live protection on the request path. Evals are for learning from captured behavior over time, improving policies, and understanding whether agents are performing work the way the business expects.
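The split between inline guardrails and post-hoc evals can be illustrated with one hypothetical rule used two ways. The regex below is a deliberately crude detector for strings shaped like AWS access key IDs or email addresses. Real evals would typically use richer scorers or labeled datasets. The sketch only shows where each check runs, and `inline_guardrail` and `leak_rate` are hypothetical names.

```python
from __future__ import annotations

import re
from dataclasses import dataclass

# Hypothetical detector: strings shaped like AWS access key IDs, or email addresses.
SENSITIVE = re.compile(r"\bAKIA[0-9A-Z]{16}\b|[\w.+-]+@[\w-]+\.[\w.]+")


@dataclass
class GuardrailResult:
    allowed: bool
    reason: str = ""


def inline_guardrail(tool_output: str) -> GuardrailResult:
    """Runs on the live request path, so it must be cheap and decisive."""
    if SENSITIVE.search(tool_output):
        return GuardrailResult(False, "sensitive data in tool output")
    return GuardrailResult(True)


def leak_rate(captured_outputs: list[str]) -> float:
    """Post-hoc eval: share of captured outputs that would trip the rule.

    Runs offline over stored traces or a curated dataset, so its job is
    to inform policy changes rather than to block calls.
    """
    if not captured_outputs:
        return 0.0
    flagged = sum(not inline_guardrail(o).allowed for o in captured_outputs)
    return flagged / len(captured_outputs)
```

Inline, a failing `GuardrailResult` would stop a response before it reaches the agent. Offline, running `leak_rate` over a window of captured traces would show whether the rule, or the policy around that tool, needs adjusting.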

Result

BRAM turns MCP from a collection of direct tool connections into an operational control layer for agentic products.

For the products I am building, that means Uptivus, Humagician, and future AI systems can share a consistent way to observe agent behavior, enforce policy, review sensitive actions, and evaluate outcomes. Instead of every agent integration becoming its own one-off risk surface, BRAM creates a gateway where agent traffic can be understood and governed.

The long-term goal is simple: make AI agents easier to ship responsibly. If an agent can call tools, access data, or trigger work, there should be a clear place to watch it, control it, and improve it.